Heuristics - SOLUTION

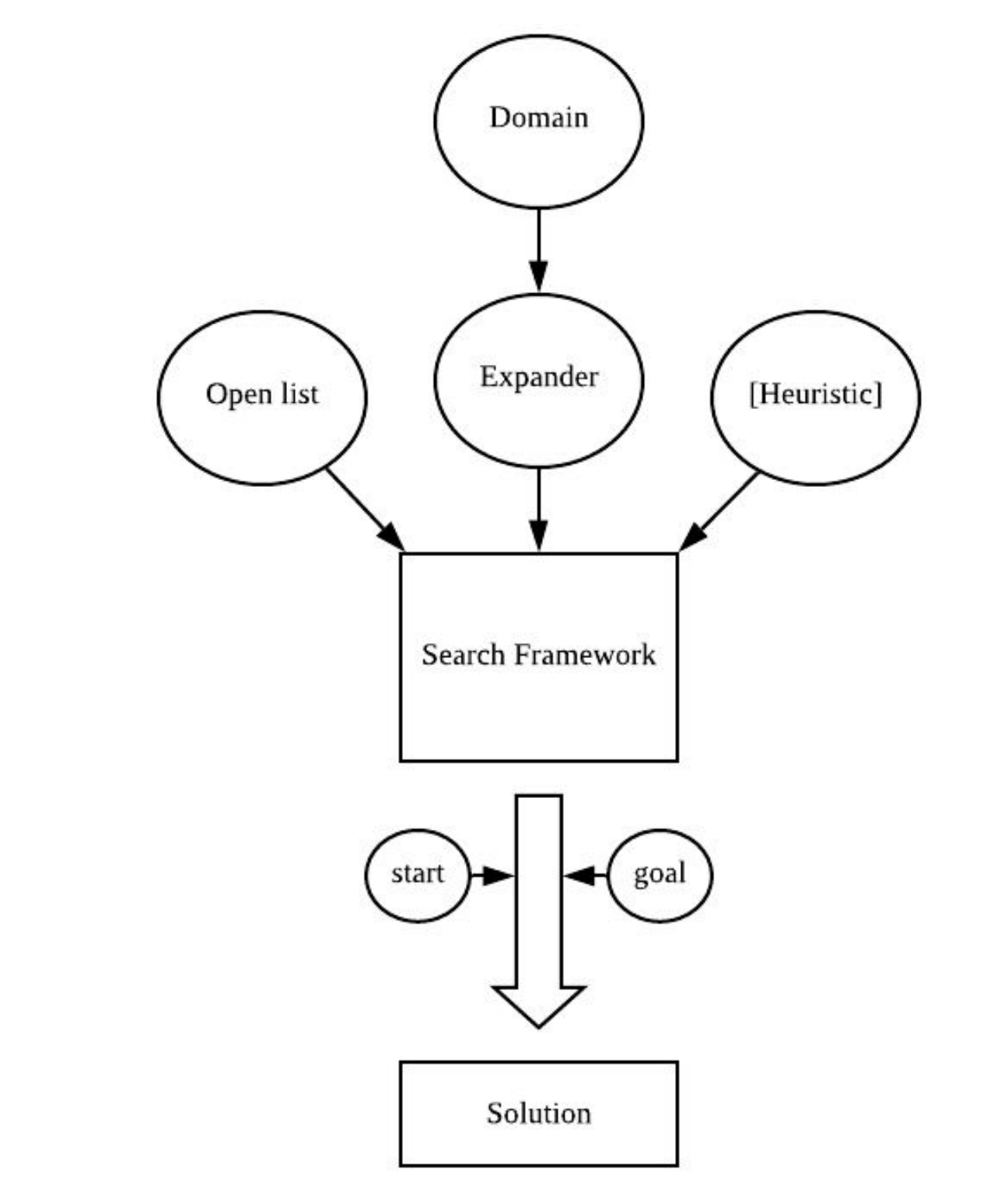

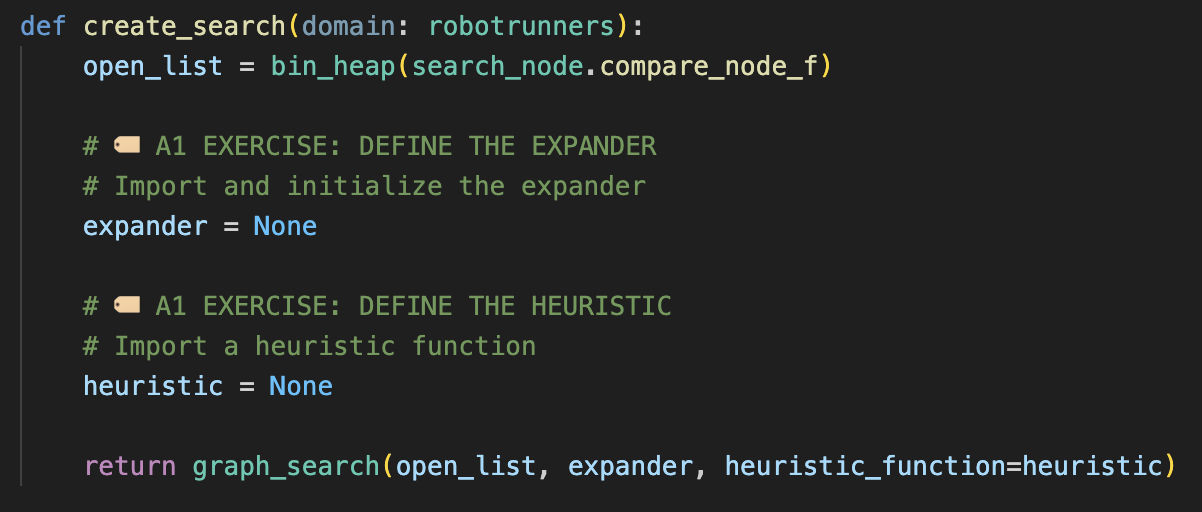

Creating the search

Time to put your search together!

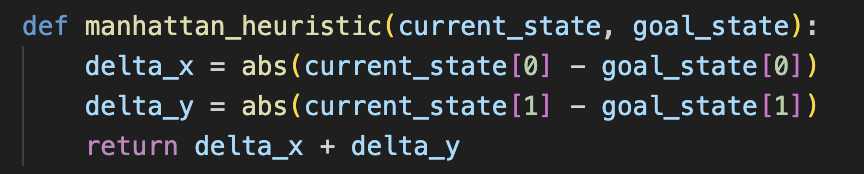

In a1/ex3_create_search.py, you'll find the scaffolding for a search engine. Modify it to use the manhattan distance heuristic and the expander you implemented in the previous exercise:

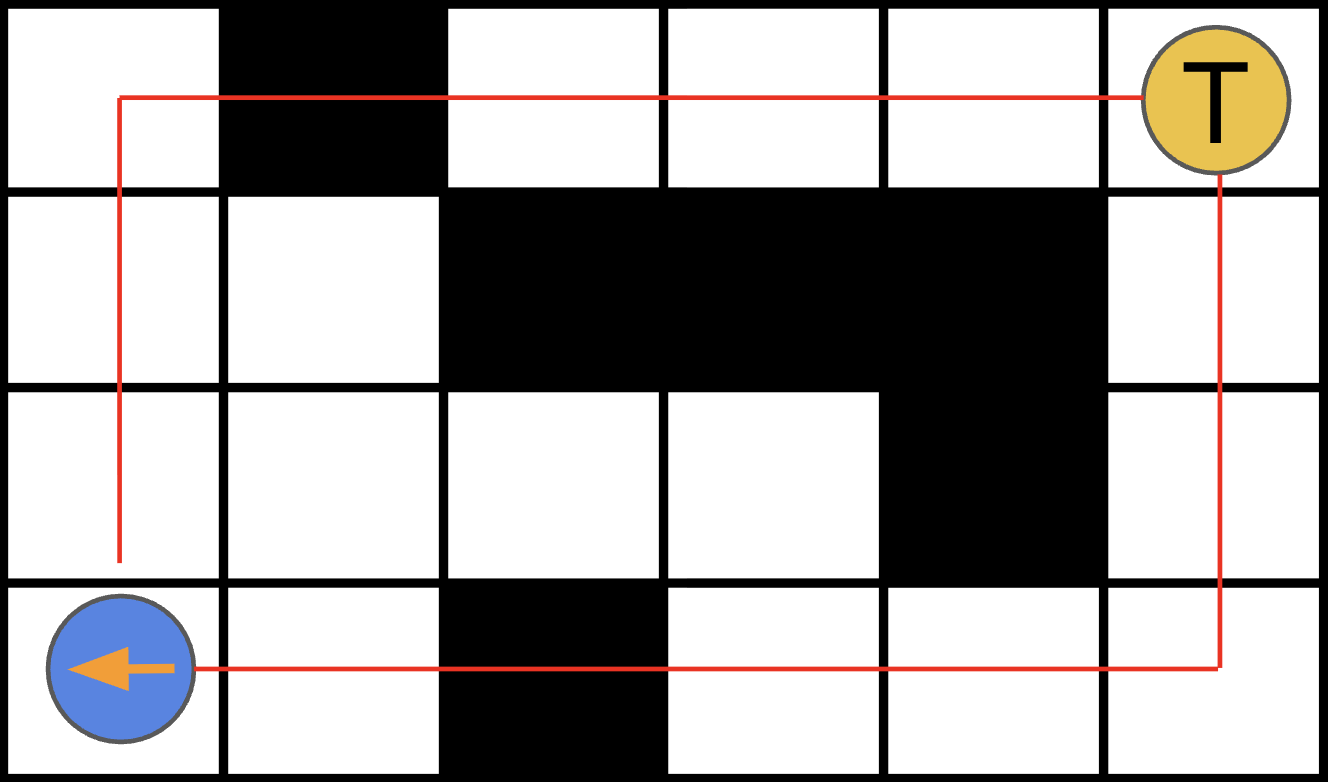

Run your Code

Run the start kit with one agent:

opss25-lifelong --inputFile example_problems/random/random_1.json -s 100

Then, visualise your solution:

opss25-planviz --plan=output.json --map=example_problems/random/maps/random-32-32-20.map

If on Apple Silicon, you may need to run source activate base from your bash shell.

How to Choose a Heuristic?

Not all heuristics are equal. What do we want from a heuristic?

Improving our Heuristic

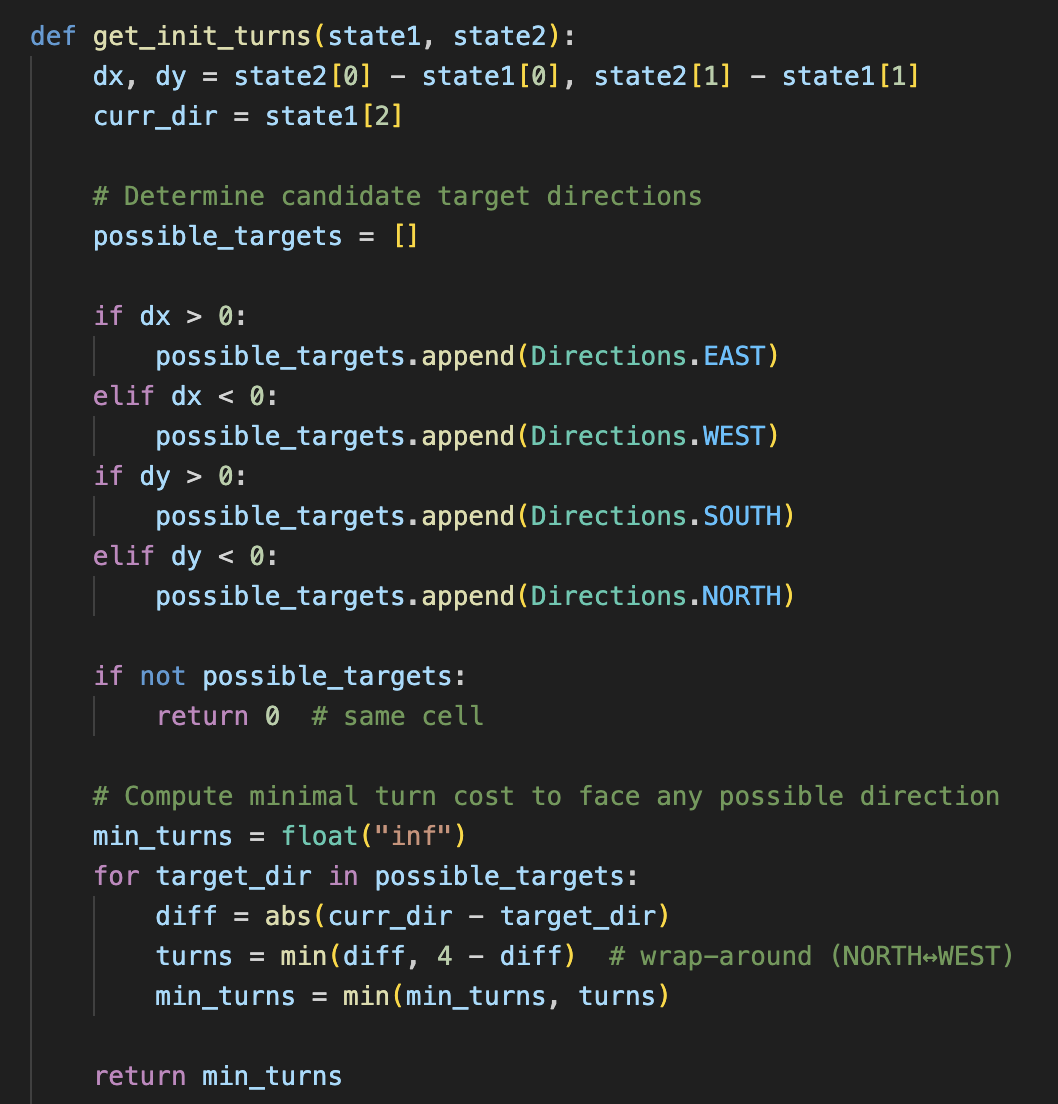

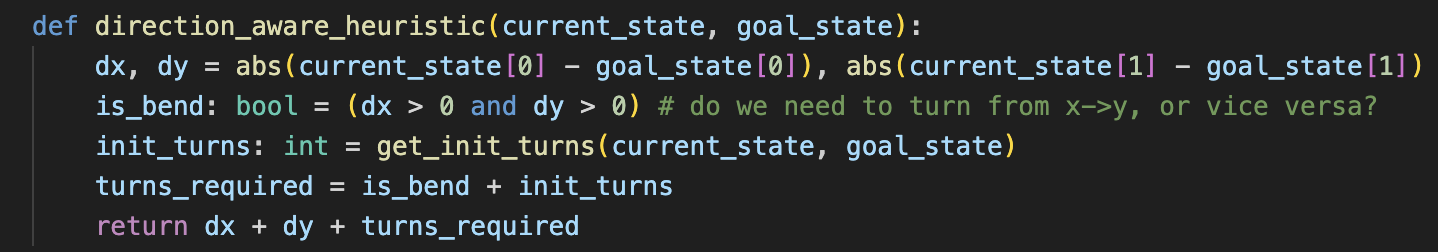

Task: Modify the manhattan distance heuristic to be direction-aware.

We have provided a template for get_init_turns to help calculate the number of turns that may be required for an agent to face in one of the heuristic-recommended directions.

Hint: agents may require some number of turns to face the correct direction, and then may need to bend their path.

Improving our Heuristic - SOLUTION

Congrats on completing the first exercise!

You should now have a working A* search.

What's next?

-

Check out the

ex4_basic_planner.pyfile. It contains the structural code that runs your search engine in the context of the robot runner start kit. -

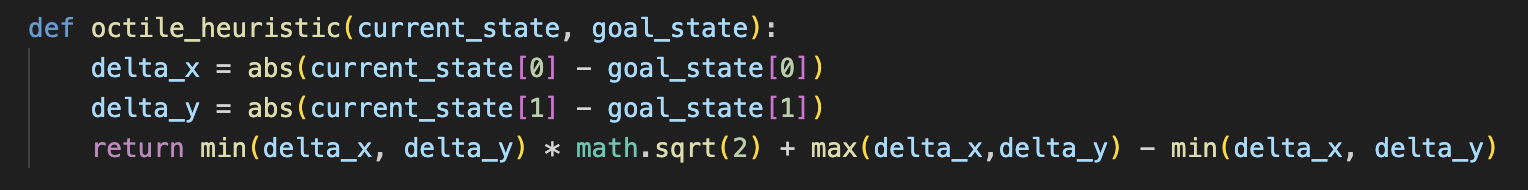

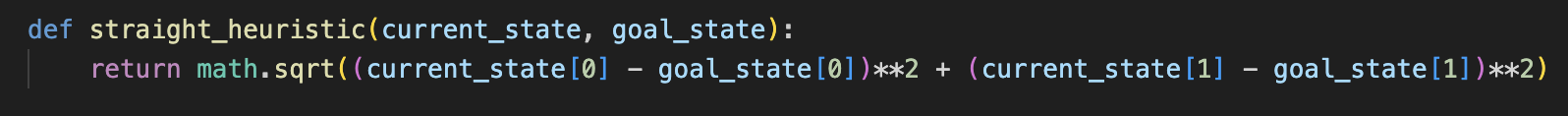

Experiment with various heuristics.

-

Try creating a visualisation using Posthoc.

Arc 2: Dealing with Agent Collisions

We now have a working low-level search! Agents can get where they need to go.

But agents are not alone. Agents must plan paths in a shared environment.

Switch to the a2 branch to continue. We have already filled out the expander from a1 for you.

Let's see what happens when we plan paths for two agents.

Run and observe the output:

opss25-lifelong --inputFile example_problems/random/random_2.json -s 500

opss25-planviz --plan=output.json --map=example_problems/random/maps/random-32-32-20.map

Dealing With Agent Collisions - Part A

Evidently, we have an issue now. Agents must learn to avoid each other!

In this Exercise, we will work on implementing a way of tracking locations that agents occupy in order to avoid collisions. We call this data strcture a reservation table, and it has two main methods:

reserve(state)(reserves a specific location for an agent),is_reserved(state)(which checks if a location is reserved)

In your code, you will see template functions for these two functions. It is your task to complete the implementation. You are safe to assume that the class has an inbuilt vertex_table, which is a 2D array indexed by [x][y].

Dealing With Agent Collisions - Part B

You will also need to complete this implementation---agents need to reserve their location in our high-level planner! To do so, you will need to:

- modify your

expanderso that it does not generate new states if there is a collision! - modify the

high-level plannerto make agents reserve their path. We can call the low level planner every single time step to make things easier.

Test your implementation (what looks different?):

opss25-lifelong --inputFile example_problems/random/random_2.json -s 100

opss25-planviz --plan=output.json --map=example_problems/random/maps/random-32-32-20.map

Reservation table - SOLUTIONS

def reserve(self, *states: list[robotrunners_state]):

for state in states:

x, y, *_ = state

self.vertex_table[x][y] = True

def is_reserved(self, state: robotrunners_state):

x, y, *_ = state

return self.vertex_table[x][y]

Checking reservation table - SOLUTION

def expand(self, current):

self.succ_.clear()

for a in self.get_actions(current.state_):

new_state = self.move(current.state_, a.move_)

if self.reservation_table_.is_reserved(new_state):

continue

self.succ_.append((new_state, a))

return self.succ_[:]

Reserve paths - SOLUTION

def plan(

env: MAPF.SharedEnvironment,

paths: list[list],

last_did_error: bool = False,

):

table.clear()

for i in range(len(paths)):

paths[i] = run_search(env, i)

# Check if we've got a solution

if paths[i]:

# Reserve all points on the path

table.reserve(interop.get_agent_state(env, i), *paths[i])

return paths

Dealing With Agent Collisions - Part C

While our agents now reserve their paths, this is done naively.

You see a lot of agents fail to find a solution, and thus not move (no agents actually occupy these locations!).

In the simulator, agents plan paths at each timestep anyways.

As such, we can avoid collisions by only checking next-step reservations.

Task: modify the expand() function to check for collisions only for the first step in your path.

Test and visualise your implementation - do things seem to improve?

Reserve paths, slightly better approach - SOLUTION

def plan(

env: MAPF.SharedEnvironment,

paths: list[list],

last_did_error: bool = False,

):

table.clear()

for i in range(len(paths)):

paths[i] = run_search(env, i)

# Check if we've got a solution

if paths[i]:

# Reserve only the first point on the path

table.reserve(interop.get_agent_state(env, i), paths[i][0])

return paths

Congrats on completing the second exercise!

You can now plan collision-free paths for multiple agents in a shared environment.

What's next?

-

Experiment with visualising various scenarios.

-

Think about how we can improve our existing systems

After the break, we will expand upon these ideas to bridge the gap to MAPF algorithms and lifelong planning.

Participating in Robot Runnersmini

Go to the contest server https://opss25.contest.pathfinding.ai/

Click Sign in with GitHub.

Participating in Robot Runnersmini

Authorise the app to access your profile.

Participating in Robot Runnersmini

We're going to create a submission repository.

Click Create repository.

Participating in Robot Runnersmini

Your repository should be created for you.

Click open repository.

Participating in Robot Runnersmini

You'll may get asked to accept an invitation to the repository.

Click Accept invitation.

Now you're free to push your code to this repository

Participating in Robot Runnersmini

You'll may get asked to accept an invitation to the repository.

Click Accept invitation.

Now you're free to push your code to this repository

Participating in Robot Runnersmini

When you push to that repository, it'll be available to be selected. Click Submit, and verify that it's queued up below.

Participating in Robot Runnersmini

Click on a submission to check out its details.